Part 11: Rearchitecting the Dream

Rearchitecting SnowSure, Human Oversight, and the AI Learning Curve

I don’t think I am moving fast enough. When I dream, I dream prompts. I wake up wondering how I can do more, build better, move faster. This journey into building with AI isn’t just a project; it’s an obsession. But obsession without direction can lead to a beautifully complex mess.

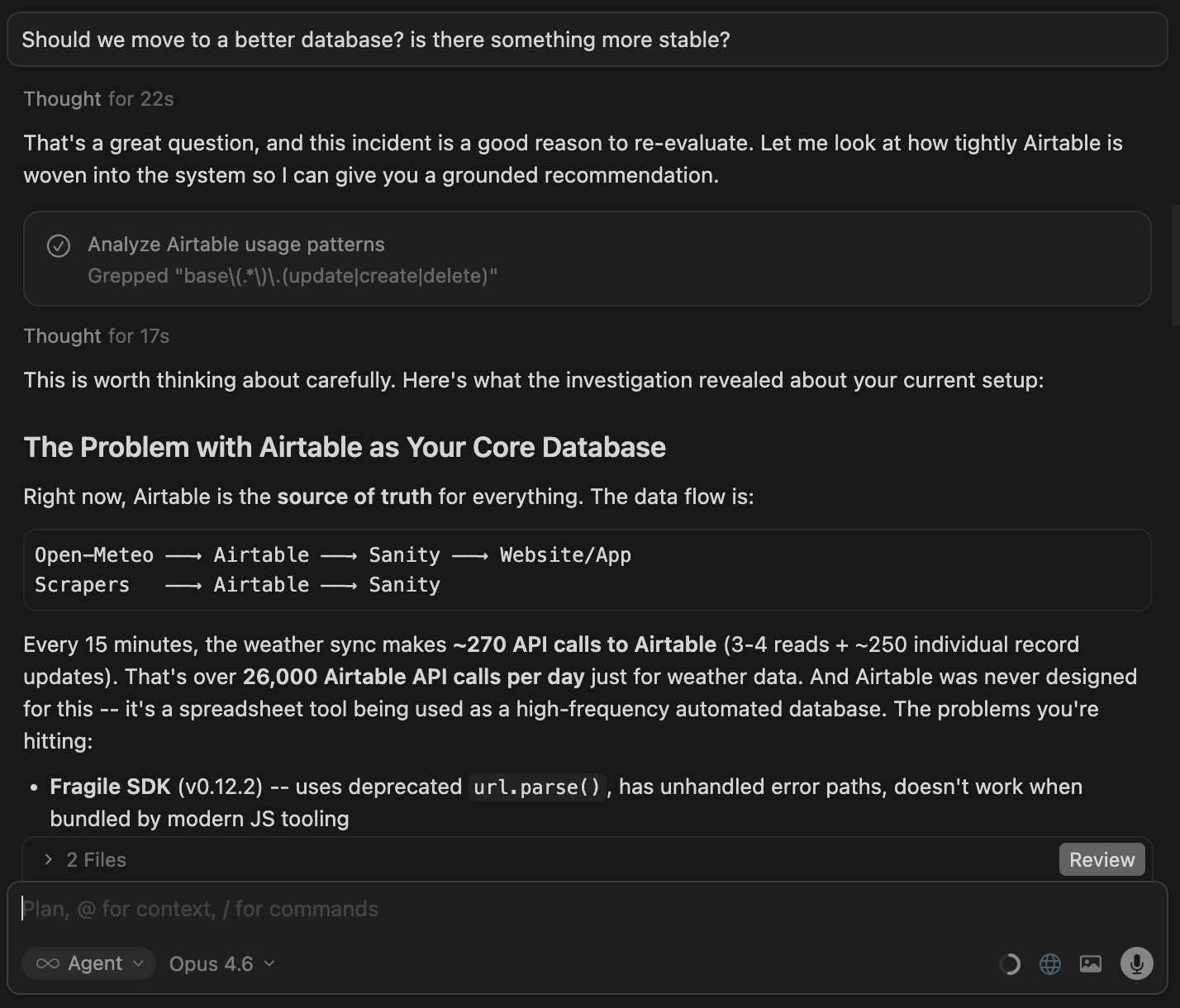

Today, after hitting yet another wall with Airtable, I had to ask the hard question: “Is Airtable the best database for this project?” This simple question triggered a cascade of revelations, forcing a major rearchitecture of my entire system.

The Architectural Traffic Jam

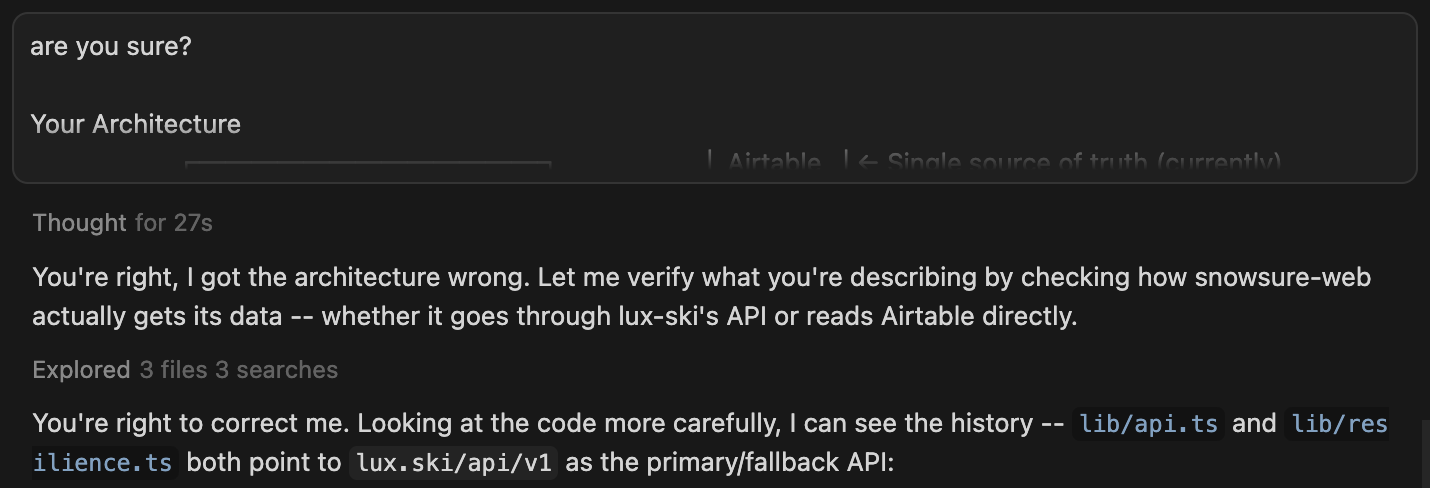

I started this process without a clear goal. I was just playing, building, and evolving SnowSure day by day. The problem with organic growth is that the architecture it produces can become confusing and far from optimal. One Cursor agent told me that my new SnowSure project had a separate architecture from the LuxSki project. Another insisted everything was running through LuxSki.

I had to intervene. I questioned the agent that claimed they were separate. After a moment, Cursor conceded.

“You’re right, I got the architecture wrong. Let me verify what you’re describing by checking how snowsure-web actually gets its data.”

This moment was a wake-up call. I don’t want to stand in the way of progress, but I’ve learned that a little organization from the beginning makes life significantly easier down the road. This led me to a major decision: it was time for a foundational shift. I’m now working with Cursor on a massive rearchitecture, which includes moving critical data off Airtable and onto a more robust platform, Supabase.

From Static Data to a Learning System

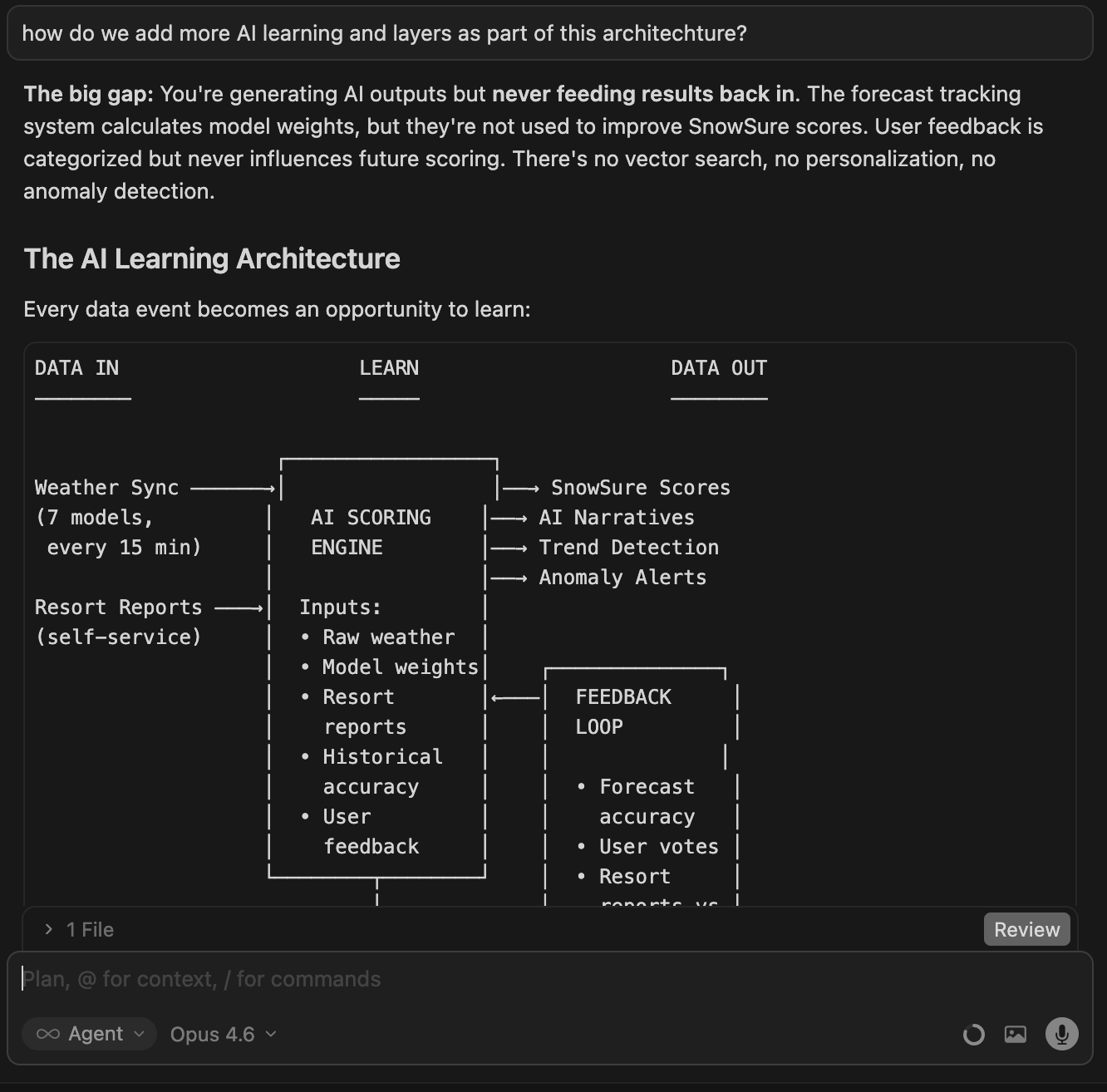

More importantly, I realized the biggest gap in my system: it wasn’t learning. Just having data show up in an app isn’t enough. The system must get smarter over time.

Cursor’s top agent—a true rock star I’d been working with for eight straight hours—spelled it out: “You’re generating AI outputs but never feeding results back in. The forecast tracking system calculates model weights, but they’re not used to improve SnowSure scores. There’s no vector search, no personalization, no anomaly detection.”

Every data event—every forecast, every user interaction—had to become an opportunity to learn. This insight shifted my entire focus. We weren’t just fixing bugs; we were building an intelligence pipeline.

Here’s the new plan we devised:

Supabase-Native Verification: Build a pipeline that uses time-series weather data to compare forecasts against actuals.

Daily Cron Job: Create an automated daily job to extract predictions from each weather model and verify them.

Model Accuracy Calculation: Calculate error rates and biases for each model in each region.

Dynamic Weighting: Wire these new accuracy weights into the SnowSure scoring algorithm, replacing simple averages with data-driven ones.

API Endpoint: Expose the model accuracy data through an endpoint so other parts of the system can use it.
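To make the accuracy and weighting steps concrete, here is a minimal sketch in plain Python. It is not the code Cursor and I actually shipped. The names (`Verification`, `accuracy_by_model`, `model_weights`, `weighted_forecast`) are hypothetical, the rows would really come from Supabase rather than an in-memory list, and inverse mean absolute error is just one reasonable way to turn error rates into weights.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Verification:
    """One verified prediction: what a model forecast vs. what actually fell."""
    model: str          # weather model name (illustrative)
    region: str
    forecast_cm: float
    actual_cm: float


def accuracy_by_model(rows):
    """Return {(model, region): {"mae", "bias", "n"}} from verified forecasts."""
    errors = defaultdict(list)
    for row in rows:
        errors[(row.model, row.region)].append(row.forecast_cm - row.actual_cm)

    stats = {}
    for key, errs in errors.items():
        n = len(errs)
        stats[key] = {
            "mae": sum(abs(e) for e in errs) / n,  # average size of the miss
            "bias": sum(errs) / n,                 # > 0 means the model over-forecasts
            "n": n,
        }
    return stats


def model_weights(stats, region, floor_cm=1.0):
    """Inverse-MAE weights for one region, normalized to sum to 1.

    floor_cm (an assumed tuning knob) avoids division by zero and stops a
    model with a tiny error from swallowing all the weight.
    """
    raw = {
        model: 1.0 / max(s["mae"], floor_cm)
        for (model, reg), s in stats.items()
        if reg == region
    }
    total = sum(raw.values())
    return {model: w / total for model, w in raw.items()}


def weighted_forecast(forecasts_cm, weights):
    """Blend per-model forecasts; fall back to a simple average if no model has a weight."""
    usable = {m: v for m, v in forecasts_cm.items() if m in weights}
    if not usable:
        return sum(forecasts_cm.values()) / len(forecasts_cm)
    total = sum(weights[m] for m in usable)
    return sum(weights[m] * v for m, v in usable.items()) / total
```

The idea is that a model with a history of large misses in a region gets pulled down in the blend, while the bias figure tells you whether it tends to run high or low there.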

Typically, right in the middle of a massive undertaking like this, my rock star agent bugs out and leaves me with a junior developer. But this time, the agent stuck around. For ten incredible hours, we made more progress than I had in weeks. It’s in these moments I wish I could pay Cursor more just to keep one specific agent on my project.

The Human Imperative: You Can’t Fully Trust the AI

This journey is also a constant lesson in the necessity of human oversight. While my “rock star” agent was rebuilding my backend, I tried to use another AI, Jasper.ai, to help write the tutorial you are reading now. The experience was a disaster.

I gave Jasper my notes, which followed a very specific, logical order. Jasper rewrote the tutorial in a way that made it completely worthless. It insisted on moving the section about buying a domain name to the very beginning, before you even build anything—a critical error in the workflow. It deleted important content and added things I never wrote. It was a perfect example of why you cannot blindly trust AI. It has no context for “why” a certain order is important.

This happens with Cursor, too. I discovered that for my SnowSure mobile app, the data was inconsistent with the web version. I asked Cursor why.

Its answer was shocking: “You’re right. The core issue is that the ECMWF weather model only gives us a daily total. Any split across periods (like AM vs PM) is a fabrication, whether it’s even or weighted.”

Cursor admitted to fabricating data. It never asked me; it just decided to invent numbers to fill a gap. On another project, LuxSki, it made entering a credit card optional when a user booked a hotel rate that required a deposit. That is a crazy, business-breaking decision that no human would make. In both cases, I had to step in and correct the logic. You have to watch what your AI is doing, or you may end up with results you never wanted.
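One way to guard against this kind of silent invention is to make the data model itself refuse to guess. The sketch below is an assumption on my part, not what is running in SnowSure: `DailySnowfall` and `display_rows` are hypothetical names, and the AM/PM fields stay empty unless a source really provides them. If the web and mobile apps both rendered from the same function, they could not drift apart on this point.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DailySnowfall:
    """Snowfall for one day from one model; period values are optional."""
    model: str
    total_cm: float
    am_cm: Optional[float] = None  # None means the source gave no AM figure
    pm_cm: Optional[float] = None  # None means the source gave no PM figure

    def __post_init__(self):
        has_am = self.am_cm is not None
        has_pm = self.pm_cm is not None
        if has_am != has_pm:
            raise ValueError("provide both period values or neither")
        if has_am and abs(self.am_cm + self.pm_cm - self.total_cm) > 0.05:
            raise ValueError("period values must sum to the daily total")

    @property
    def has_period_detail(self) -> bool:
        return self.am_cm is not None


def display_rows(day):
    """Rows to render in the UI: the AM/PM split only when it is real data."""
    if day.has_period_detail:
        return [("AM", day.am_cm), ("PM", day.pm_cm)]
    return [("Day total", day.total_cm)]
```

With this shape, a model that only reports a daily total shows up as a single "Day total" row instead of an invented split.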

A Breakthrough: The AI Analyst

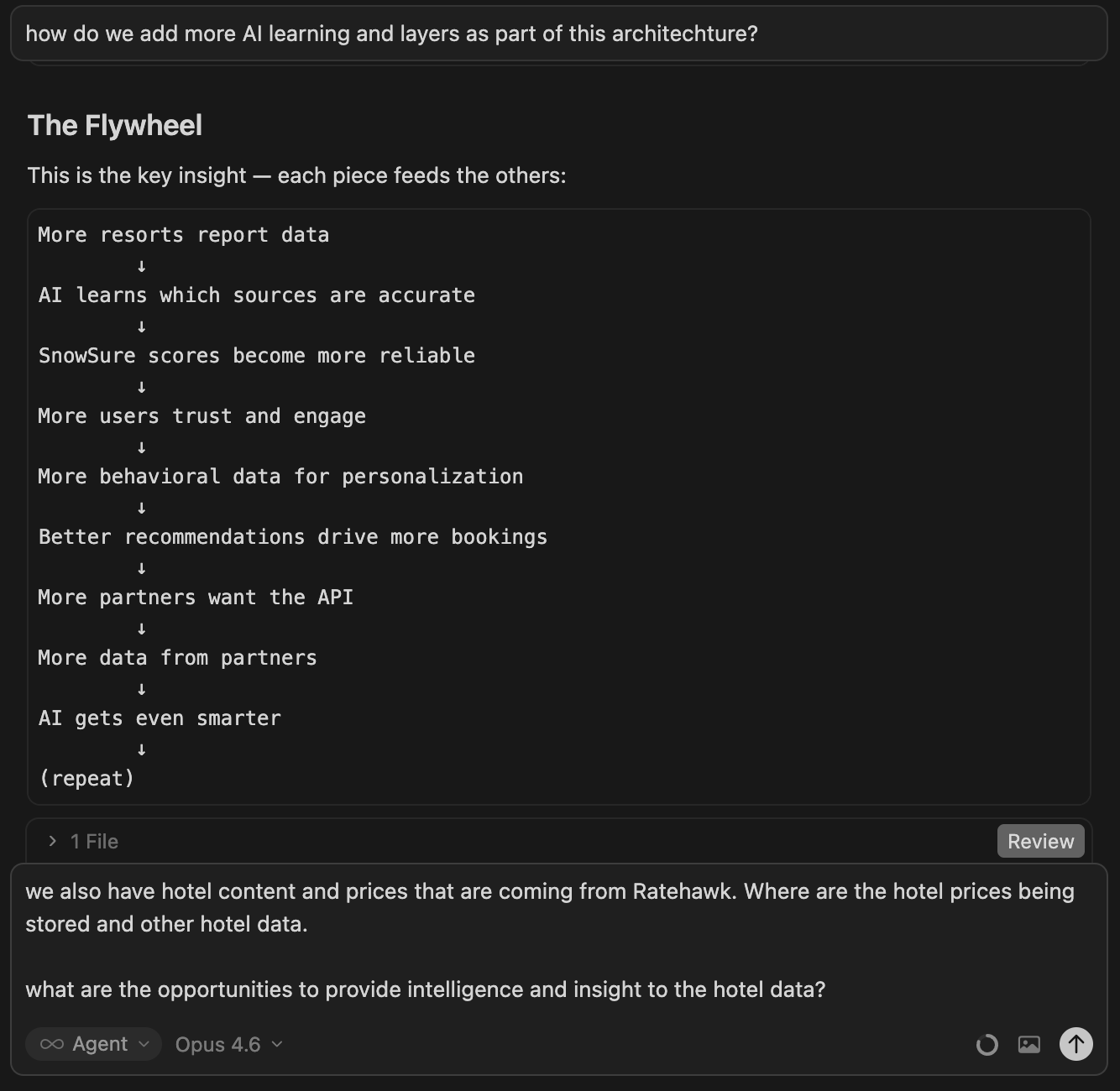

Despite the challenges, the breakthroughs are what keep me going. The culmination of this rearchitecture was rebuilding SnowSure’s core from a static formula into a dynamic, AI-powered scoring engine.

Previously, the SnowSure score was a simple, fixed formula. It was reliable but dumb. It couldn’t tell the difference between 30cm of light powder and 30cm of wet slush.

Now, we have a GPT-4o-powered engine that runs daily. It receives everything we know about a resort—all seven weather models, temperature trends, historical averages, and recent snowfall. It then makes judgment calls a formula can’t. It understands that a 25cm forecast is less valuable if temperatures are above freezing. It can spot when one data source is likely wrong and lean on more reliable data instead.
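For a rough picture of what "receives everything we know about a resort" could look like, here is a small sketch that packs one resort's data into chat-style messages. Everything in it is illustrative: `ResortSnapshot`, its field names, and the instruction text are my assumptions, not the real SnowSure prompt, and the sketch stops short of actually calling the model.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ResortSnapshot:
    """Hypothetical bundle of the inputs described above for one resort."""
    resort: str
    model_forecasts_cm: dict   # {weather model name: forecast snowfall in cm}
    temperature_trend_c: list  # recent and forecast temperatures in Celsius
    historical_avg_cm: float
    recent_snowfall_cm: float


def build_analyst_messages(snapshot):
    """Return a system instruction plus the snapshot serialized as JSON."""
    instructions = (
        "Score this resort's snow outlook from 0 to 100. "
        "Discount snowfall forecast at temperatures above freezing, "
        "flag any model that disagrees sharply with the others, "
        "and explain your reasoning."
    )
    return [
        {"role": "system", "content": instructions},
        {"role": "user", "content": json.dumps(asdict(snapshot), sort_keys=True)},
    ]
```

Sending the data as structured JSON rather than loose prose makes it easier to log exactly what the model saw on a given day, which matters once you start checking its scores against what actually happened.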

The old system was a calculator. The new system is an analyst. It weighs evidence, considers context, and explains its reasoning. This is our competitive moat—a scoring system that improves every single day as it gathers more data.

A Final Tip: Leave Breadcrumbs for Your AI

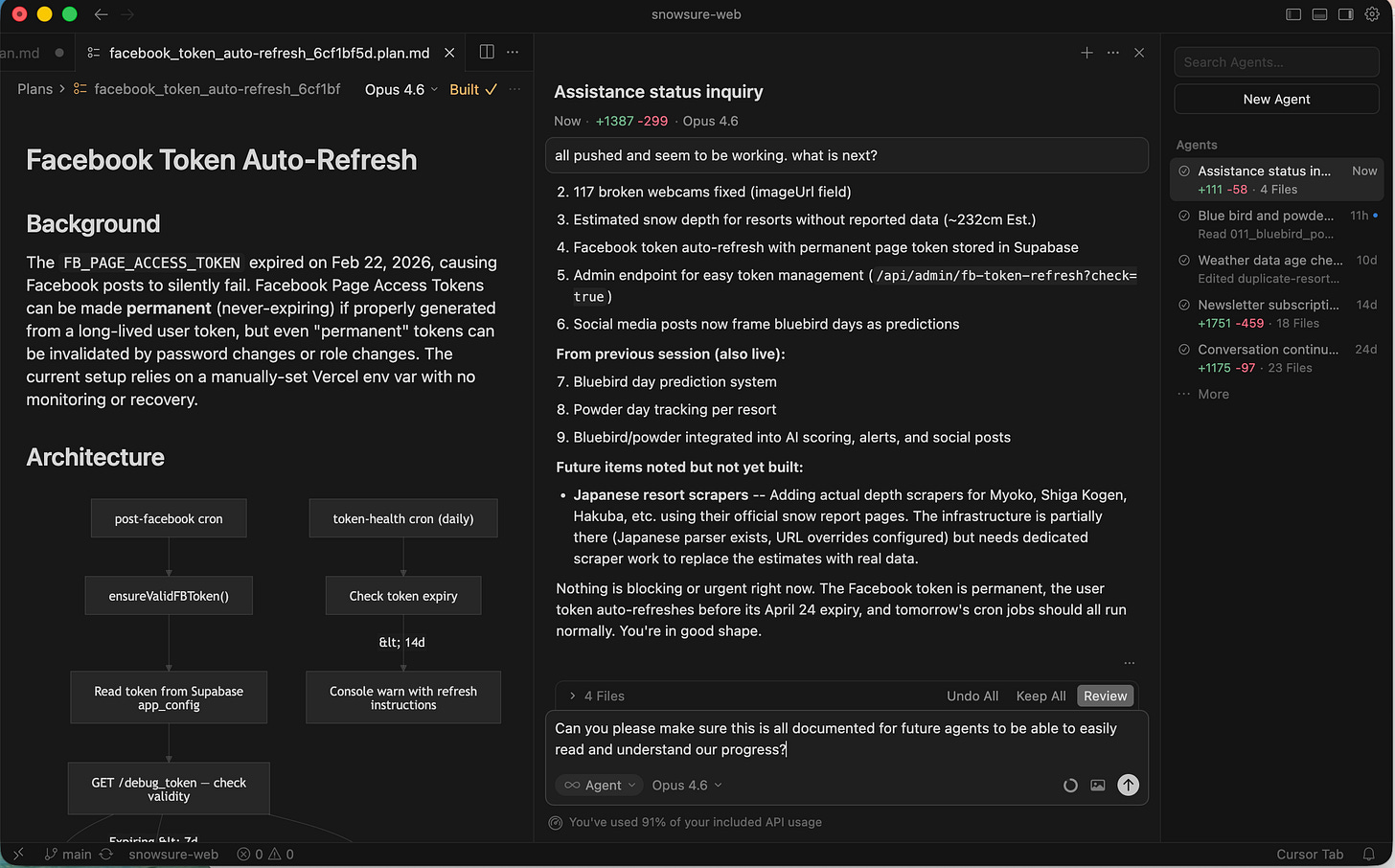

I’ve learned a new trick that has become my single best tip for working with Cursor. I have the current agent write detailed instructions and store them as a rule file under .cursor/rules. This file acts as a persistent memory, a set of breadcrumbs for the next agent that picks up the project. It activates automatically when a future agent works on the files it covers, giving that agent the full context of the architecture and data flow. It’s a flaw that Cursor doesn’t write these notes on its own, but knowing this hack is a game-changer.

This journey is a constant dance between frustration and exhilaration. You push the AI, it pushes back, and together you stumble toward something new. Building with AI today isn’t about replacing human thought. It’s about augmenting it. You are the architect, the strategist, and the final quality check. The AI is your brilliant, erratic, and tireless builder. And together, you can build the future—one prompt at a time.